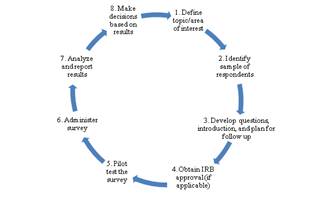

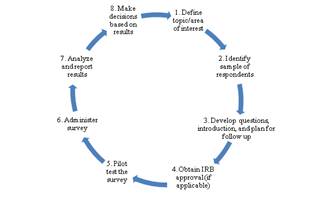

Best Practices for Conducting Surveys

These guidelines are designed to help offices that need to gather survey responses from students as part of their unit's responsibilities. Student email addresses provide a convenient, low-cost way to inform students about online surveys, but care must be taken to ensure that students are not overwhelmed by the volume of requests or bothered by inappropriate surveys. "Survey fatigue" results in lower response rates and makes students less vigilant about checking their email. In that spirit, the following guidelines are suggested:

You will obtain higher response rates if you develop a survey that is concise. Create a list of issues, questions, ideas, presumptions, etc. that you want to gain insight into. Ensure that this information is not available from other sources before beginning the survey process.

You typically do not have to survey all potential respondents; a sample is usually enough. Your sample should be specific. For example, when surveying students, target only the relevant group of students, not all students. Sample only as many students as needed to ensure a reasonable sampling error. This will also help control the number of times students are asked to complete surveys. Students are more likely to respond when they understand the relevance and importance of the survey questions to them. It is also important to minimize the number of surveys that any individual student is asked to complete. This helps ensure that response rates do not drop to unacceptable levels for all surveys and that students do not view their official email address as a source of unwanted and unneeded messages.
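As a rough illustration of sampling "only as many students as needed," the sketch below estimates a sample size using Cochran's formula with a finite population correction. The 95% confidence level, 5% margin of error, conservative proportion of 0.5, and 25% expected response rate are assumptions chosen for illustration, not values set by these guidelines, and the function names are hypothetical.

```python
import math
from statistics import NormalDist


def sample_size(population, margin_of_error=0.05, confidence=0.95, proportion=0.5):
    """Completed responses needed for a given margin of error (Cochran's formula)."""
    # z-score for a two-sided confidence level (about 1.96 for 95%)
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    # Sample size for an effectively infinite population
    n0 = (z ** 2) * proportion * (1 - proportion) / margin_of_error ** 2
    # Finite population correction shrinks the requirement for small groups
    n = n0 / (1 + (n0 - 1) / population)
    return math.ceil(n)


def invitations_needed(completes, expected_response_rate, population):
    """Invitations to send so that roughly `completes` responses come back."""
    # Never invite more people than the group contains
    return min(population, math.ceil(completes / expected_response_rate))


if __name__ == "__main__":
    needed = sample_size(10_000)
    print(needed, invitations_needed(needed, 0.25, 10_000))
```

Under these assumptions, a group of 10,000 students needs about 370 completed responses, so inviting roughly 1,480 students would be enough rather than emailing all 10,000. Actual response rates vary, so treat the invitation count as a starting estimate.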

The main suggested guideline in developing question items is to keep in mind what kind of information each and every question will give you. If you cannot come up with a concrete answer, you should drop the item. Generally, you should not keep question items in your survey simply because you think they might come in handy at some point. Other suggested guidelines include:

- Aim for brevity and simplicity.

- Do your homework. It is good practice to consult previous literature, an online search, or previously published surveys during this development stage.

- Avoid complex wording or structure - compound sentences, complex vocabulary words, unusual terminology, idiomatic expressions - all these things will confuse some people and make your data less precise. Use simple sentences and vocabulary appropriate to your audience. This is especially important if any portion of your respondents might not be native English speakers (or native speakers of the language your survey is written in).

- Avoid vague or overly general questions - Are any of your questions so broad that they will not give you specific, actionable information? "Overall satisfaction" type questions often fall into this category. If you ask yourself what you will learn from the responses, positive or negative, from each question, you will know whether they are too vague.

- Avoid items that could be misinterpreted - For each survey item, ask yourself whether there are any ways in which it might be misinterpreted. Many words have different meanings to different people. References to imprecise concepts such as time and distance are subjective.

- Avoid questions with a "right" answer - It is an easy and common mistake to write items that have a socially "correct" or desirable answer. Also be sure you do not lead your respondents to answer in a particular way by making them think you "want" a certain response. Present your items neutrally.

- Avoid double-barreled items - These are items that ask about more than one thing. If you see the word "and" or "or" in your item, chances are it is double-barreled. Data from items like this are useless because you have no way of knowing which part of the question each respondent was thinking of when responding.

- Once you have your questions, plan your follow-up: determine how and when you will remind potential respondents to complete the survey. (One to two follow-up reminders are recommended. Three or more reminders generally do not improve response rates.)

IRB approval must be on file prior to survey distribution.

Test the survey with your development group (e.g., for a student survey, include students in the pilot test; for a faculty survey, include faculty). The purpose of pilot testing is to obtain feedback on the wording, structure, and format of the survey. During pilot testing, ask respondents to complete the survey and time how long it takes them. Feedback from pilot testers will enable you to modify the survey before distributing it to a larger audience. As part of this process, it is good practice to test the online survey and the underlying data before opening the survey to ensure that it works and flows correctly.

Consult with IR to ensure that your survey does not conflict with the distribution of university-sponsored surveys. By coordinating efforts with IR, we can ensure that respondents receive a reasonable number of surveys and that survey timelines do not overlap.

The survey invitation should include the following:

- Survey title

- Who is conducting the survey

- Short description of the purpose and proposed use of the survey

- How results will be used and whether they are confidential

- Whom to contact with questions

- Closing statement

Survey results should be shared with the appropriate constituents. Note that, in general, you do not want to analyze or report results in a manner that allows individual respondents to be identified. For example, remove names and titles from responses. It is most useful to summarize survey responses across all of the people who completed the survey.
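The idea of removing names and titles and reporting only summaries can be sketched as follows. This is a minimal illustration, assuming responses are available as simple records; the field names (`name`, `title`, `email`) and function names are hypothetical and should be adjusted to match your own survey export.

```python
from collections import Counter

# Hypothetical field names; adjust to match your survey export.
IDENTIFYING_FIELDS = {"name", "title", "email"}


def deidentify(response):
    """Return a copy of one response without directly identifying fields."""
    return {k: v for k, v in response.items() if k not in IDENTIFYING_FIELDS}


def summarize(responses, question):
    """Count how many respondents chose each answer to one question."""
    return Counter(r[question] for r in responses if question in r)


if __name__ == "__main__":
    raw = [
        {"name": "A", "title": "Student", "satisfaction": "High"},
        {"name": "B", "title": "Student", "satisfaction": "Low"},
        {"name": "C", "title": "Staff", "satisfaction": "High"},
    ]
    clean = [deidentify(r) for r in raw]
    print(summarize(clean, "satisfaction"))
```

Dropping direct identifiers is only a first step. Free-text answers and very small groups can still reveal who responded, so review summaries before sharing them.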

based on results.

NOTE: To request additional survey research support, contact the Survey Research and Assessment Team in the Office of Institutional Effectiveness & Analytics (IEA) at ir@csustan.edu. IEA provides a full-service survey facility. Our services include, but are not limited to, study and instrument design, sampling procedures, data collection, survey administration, data analysis, and report writing.

Updated: February 13, 2025